Overview

NSX ALB (formerly known as Avi) offers rich L4-L7 load balancing capabilities across different clouds and workloads. It can also provide L4-L7 load balancing for container workloads by deploying an ingress controller called AKO (Avi Kubernetes Operator), which leverages the standard Ingress API in Tanzu or K8s clusters.

The AKO deployment consists of the following components:

- The NSX ALB Controller

- The Service Engines (SE) –> Actual Load Balancers

- The Avi Kubernetes Operator (AKO) –> Ingress Controller

What are Ingress and Ingress Controllers & Why?!

By default, when you spin up a pod, the containerized application running inside it is not reachable from outside the cluster. To allow external access, you need to expose the pods using a Service.

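There are three commonly used Service types in Kubernetes (a fourth type, ExternalName, simply maps a Service to an external DNS name and is not relevant here):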

- ClusterIP

- NodePort

- LoadBalancer

ClusterIP, the default type, allows access to the pods only from within the Kubernetes cluster. NodePort exposes the pods on a static port (by default in the 30000-32767 range) on every node's interface and maps that node port to the container port, so users can reach the containers using a node IP and node port combination.

The last type, LoadBalancer, is used in combination with an external load balancer, such as the ones offered by cloud providers like Amazon, Azure or GCP.
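For reference, here is a minimal sketch of the other two Service types, reusing the app: ingress-ako-web label from the test deployment later in this post (the NodePort type itself appears in that test manifest). The Service names are hypothetical and chosen only for illustration.

```yaml
# ClusterIP (the default): reachable only from inside the cluster.
apiVersion: v1
kind: Service
metadata:
  name: web-clusterip          # hypothetical name
spec:
  type: ClusterIP              # may be omitted, ClusterIP is the default
  selector:
    app: ingress-ako-web       # pods carrying this label become the endpoints
  ports:
  - port: 80                   # port exposed on the cluster IP
    targetPort: 80             # container port the traffic is forwarded to
---
# LoadBalancer: asks an external load balancer implementation
# (for example a cloud provider integration) to allocate an external IP.
apiVersion: v1
kind: Service
metadata:
  name: web-loadbalancer       # hypothetical name
spec:
  type: LoadBalancer
  selector:
    app: ingress-ako-web
  ports:
  - port: 80
    targetPort: 80
```

If no external load balancer implementation is available in the cluster, the external IP of a LoadBalancer Service typically stays in a pending state.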

So where the hell does Ingress fit in, and is it needed to access applications running in containers in pods?!

Absolutely not. The Kubernetes NodePort service mentioned above has a load distribution capability of its own and spreads incoming traffic across all the endpoints (pods) backing the service, using only simple algorithms (random selection in kube-proxy's default iptables mode, round robin by default in IPVS mode). In other words, once a NodePort service is configured, users can reach the containers using any node IP in the cluster together with the node port.

Why Ingress?!

Ingress allows users to define HTTP/HTTPS routing rules, for example based on the requested host name or URL path, which decide the backend service (and therefore the containers running our application) that incoming traffic is routed to.

Ingress exposes HTTP and HTTPS routes from outside the cluster to services within the cluster. Traffic routing is controlled by rules defined on the Ingress resource. (https://kubernetes.io/docs/concepts/services-networking/ingress/)
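To make the routing rules concrete, below is a sketch of a single Ingress that routes on both host name and URL path. The host names and the backend Service names (cart-svc, frontend-svc, api-svc) are hypothetical and do not exist in this lab.

```yaml
# Sketch of host- and path-based routing with one Ingress (hypothetical names).
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: routing-example
spec:
  rules:
  - host: shop.corp.local          # requests for this host...
    http:
      paths:
      - pathType: Prefix
        path: /cart                # ...whose URL path starts with /cart
        backend:
          service:
            name: cart-svc         # ...are sent to the cart Service
            port:
              number: 80
      - pathType: Prefix
        path: /                    # all other paths on this host
        backend:
          service:
            name: frontend-svc
            port:
              number: 80
  - host: api.corp.local           # a second host routed to a different Service
    http:
      paths:
      - pathType: Prefix
        path: /
        backend:
          service:
            name: api-svc
            port:
              number: 8080
```

With Prefix matching, the longest matching path wins, so a request for shop.corp.local/cart/items goes to cart-svc rather than frontend-svc.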

It is important to highlight that an Ingress does not expose containers on its own; it only routes traffic to a backend Service (one of the types mentioned above), so that Service must be configured before the Ingress.

What is an Ingress Controller?!

An ingress controller is the component that actually implements the intelligent (L4-L7) load balancing for the K8s cluster, which in our case is NSX ALB AKO (with the Service Engines carrying the actual traffic), while an Ingress is simply the API object that communicates the routing rules to the controller.
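In Kubernetes, the link between an Ingress object and the controller that implements it is an IngressClass. The sketch below assumes that the AKO Helm chart has created an IngressClass named avi-lb with the controller string ako.vmware.com/avi-lb; treat both as assumptions and confirm them with kubectl get ingressclass on your cluster. The IngressClass is shown only to illustrate the link, since the chart normally manages it; the Ingress name is hypothetical.

```yaml
# IngressClass of the kind the AKO chart is assumed to install (name: avi-lb).
apiVersion: networking.k8s.io/v1
kind: IngressClass
metadata:
  name: avi-lb
spec:
  controller: ako.vmware.com/avi-lb   # assumed controller identifier for AKO
---
# An Ingress that explicitly asks to be handled by that class.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: class-example                 # hypothetical name
spec:
  ingressClassName: avi-lb            # binds this Ingress to the AKO controller
  rules:
  - host: ingress.corp.local
    http:
      paths:
      - pathType: Prefix
        path: /
        backend:
          service:
            name: nodeport-web-deployment
            port:
              number: 80
```

When ingressClassName is omitted, as in the test Ingress later in this post, the Ingress is picked up by whichever IngressClass is marked as the cluster default.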

Once we deploy NSX ALB AKO on our Tanzu/K8s cluster, it communicates with the NSX ALB controller and starts deploying load balancers (Service Engines in an SE group) for that Tanzu/K8s cluster.

Lab Inventory

For software versions I used the following:

- VMware ESXi 7.0U3d

- vCenter server version 7.0U3

- TrueNAS 12.0-U7 used to provision NFS datastores to ESXi hosts.

- VyOS 1.4 used as lab backbone router and DHCP server.

- Ubuntu 20.04 LTS as bootstrap machine.

- Ubuntu 20.04.2 LTS as DNS and internet gateway.

- Windows Server 2012 R2 Datacenter as management host for UI access.

- Tanzu Community Edition version 0.11.0

- NSX ALB controller 21.1.4

For virtual hosts and appliances sizing I used the following specs:

- 3 x virtualised ESXi hosts each with 8 vCPUs, 4 x NICs and 32 GB RAM.

- vCenter server appliance with 2 vCPU and 24 GB RAM.

Prerequisites

We need to ensure that we have the following components installed and running before we proceed:

- Tanzu workload cluster (check my blog post here to learn how)

- A Linux machine (bootstrap) with kubectl, the Tanzu CLI and Helm installed.

- NSX ALB controller deployed and connected to a vCenter cloud instance.

- IPAM profile configured on the NSX ALB.

NSX ALB configuration

I decided to configure my NSX ALB controller with only the minimum required to integrate with AKO, to show that AKO handles the creation of all the ALB objects needed to load balance traffic into our Tanzu cluster. Once properly configured in a Tanzu/K8s cluster and connected to an ALB controller, AKO creates the required VIPs, virtual services and SEs.

Step 1: Converting Default-Cloud to vCenter server

I will be using the default cloud instance in ALB just for simplicity, but I would recommend creating a new cloud for production.

In the ALB GUI, navigate to Infrastructure > Clouds and edit the Default-Cloud parameters as below:

Note: We will create the IPAM profile in the next step and then add it to the Default-Cloud parameters above. IPAM is a must so that AKO can assign VIPs to every ingress created. The DNS profile, however, is skipped in this blog post, as I will manually add DNS entries for the ingress FQDNs to my DNS server.

The last step in the cloud conversion is where we define the vCenter port group to which the SE management interfaces will be attached. We also need to define either DHCP in this subnet or a static IP pool (as in my case) from which ALB can assign management IPs to the SEs.

Step 2: Create IPAM profile

In the NSX ALB GUI, navigate to Templates, and under Profiles click IPAM/DNS Profiles, then click Create and create an IPAM profile with a configuration similar to the below:

The usable network is pulled from the vCenter we connected to in step 1. This is the port group from which ALB will assign IP addresses to VIPs, so it is very important to select the right one.

Once you create your IPAM profile, go back to step 1 and add it to the IPAM profile section highlighted.

Step 3: Create an IP pool for VIP assignment

This step completes step 2, because we need to configure the IP range that the IPAM profile will allocate VIPs from. To do this, navigate to Infrastructure, expand Cloud Resources > Networks, and select the network you chose as the usable network in your IPAM profile (in my case it is called ALB-SE-VIPs).

As you can see, I have already defined an IP pool for this network. If you do not have one configured, click the pen icon to the right of the network and add an IP pool similar to the below:

At this point we are done with the NSX ALB configuration, next is AKO deployment.

Installing and configuring AKO

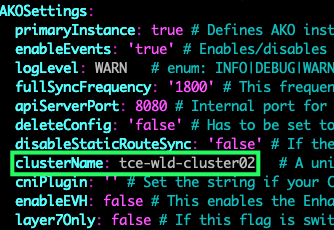

For this step we need a Tanzu or K8s cluster with master (control-plane) and worker nodes up and running. In my lab I am using Tanzu Community Edition, and I will be using one of my TCE clusters, called tce-wld-cluster02, which has 2 worker nodes.

There are different ways to deploy AKO; the easiest and most straightforward, IMO, is using Helm charts. If you do not have Helm installed on your bootstrap machine, you can install it by following the Helm docs.

Step 1: Add the VMware AKO repo to Helm

helm repo add ako https://projects.registry.vmware.com/chartrepo/ako

Step 2: Search the added repo to list all the available AKO chart versions

helm search repo ako --versions

I will be using AKO version 1.7.2, so we need to pull the matching values.yaml file. This is the configuration file that AKO uses to connect to NSX ALB and configure objects in it.

Step 3: Pull & edit the values.yaml for the selected AKO version

helm show values ako/ako --version 1.7.2 > ako-values.yaml

The above command creates a file called ako-values.yaml containing all the parameters needed to connect AKO to your NSX ALB controller. Below are snippets from the most important sections:
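As a rough guide to those sections, the fragment below shows keys that are usually edited for a setup like this lab. The key names follow the layout of the AKO 1.7.x values.yaml (check them against your pulled file), while the values are assumptions: the cluster name and VIP network come from earlier in this post, the CIDR is a placeholder, and serviceType: NodePort is inferred from the NodePort backend used in the test below. The controller host and credentials are left out because they are passed with --set in the helm install command.

```yaml
# Sketch of commonly edited sections of ako-values.yaml (illustrative values only).
AKOSettings:
  clusterName: tce-wld-cluster02      # unique per cluster; used to tag the objects AKO creates

NetworkSettings:
  vipNetworkList:                     # network the VIPs are allocated from
    - networkName: ALB-SE-VIPs        # the usable network selected in the IPAM profile
      cidr: "<vip-network-cidr>"      # placeholder: subnet of the VIP network

L7Settings:
  serviceType: NodePort               # pool members become <node IP>:<nodePort>

ControllerSettings:
  serviceEngineGroupName: Default-Group
  controllerVersion: "21.1.4"         # NSX ALB controller version from the lab inventory
  cloudName: Default-Cloud            # the cloud converted to vCenter earlier
```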

Save and exit the values file.

Step 4: Deploy the AKO Pod

Create the namespace in which the AKO pod will be deployed:

kubectl create namespace avi-ako-system

From the command prompt, run the following command to start the AKO deployment:

helm install ako/ako --generate-name --version 1.7.2 -f ako-values.yaml --set ControllerSettings.controllerHost=172.10.80.1 --set avicredentials.username=admin --set avicredentials.password=<password> --namespace=avi-ako-system

Testing Ingress on a test Nginx web server deployment

For this we need to create a test deployment, a NodePort service to expose the deployment, and an Ingress to test AKO.

You can copy and paste the below into a YAML file and roll out everything in one step using the command "kubectl apply -f <filename.yaml>".

# Test deployment: two nginx replicas listening on port 80
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: ingress-ako-web
  name: ingress-ako-web
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: ingress-ako-web
  template:
    metadata:
      labels:
        app: ingress-ako-web
    spec:
      containers:
      - image: quay.io/jitesoft/nginx
        imagePullPolicy: Always
        name: nginx
        ports:
        - containerPort: 80
          protocol: TCP
---
# NodePort service exposing the nginx pods on port 30007 of every node
apiVersion: v1
kind: Service
metadata:
  name: nodeport-web-deployment
spec:
  type: NodePort
  selector:
    app: ingress-ako-web
  ports:
  - port: 80
    targetPort: 80
    nodePort: 30007
---
# Ingress handled by AKO: routes ingress.corp.local/* to the NodePort service
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: chocomel-ingress
spec:
  rules:
  - host: ingress.corp.local
    http:
      paths:
      - pathType: Prefix
        path: /
        backend:
          service:
            name: nodeport-web-deployment
            port:
              number: 80

Verifying ALB config and testing access to the Nginx web server over Ingress

The last step is to test HTTP access to our nginx deployment using the ingress host name defined above (ingress.corp.local).

Please note that I have added a DNS entry pointing ingress.corp.local to the VIP assigned by NSX ALB to the ingress created above.

Navigate to the NSX ALB GUI and check that AKO has created the SEs, a VIP and a virtual service pointing to our backend service:

The 172.10.20.0/24 subnet is where my Tanzu cluster worker nodes reside, and port 30007 is the nodePort configured under the Service definition in the YAML sample above, which exposes the nginx containers on that port on the worker nodes.

From my Windows jumpbox I will browse to the ingress URL http://ingress.corp.local, which should bring up the nginx welcome page:
