Overview

In part two of my blog series covering Kubernetes/Tanzu as a service using Cloud Director and CSE 4.0, I continue the deployment workflow started in part one. The workflows covered in part two are the NSX ALB integration with Cloud Director and, finally, deploying a Tanzu cluster inside tenant Pindakaas, which we created in part one.

Bill of Materials

For software versions I used the following:

- VMware ESXi 7.0U3g

- vCenter server version 7.0U3g

- VMware NSX-T 3.2.2

- VMware Cloud Director 10.3.3

- VMware NSX Advanced Load Balancer 21.1.3

- TrueNAS 12.0-U7 used to provision NFS data stores to ESXi hosts.

- VyOS 1.4 used as lab backbone router and DHCP server.

- Ubuntu 20.04.2 LTS as DNS and internet gateway.

- Ubuntu 18.04 LTS as Jumpbox and running kubectl to manage Tanzu clusters.

- Windows 10 Pro as Jumpbox with UI.

For virtual host and appliance sizing I used the following specs:

- 3 x ESXi hosts each with 12 vCPUs, 2 x NICs and 128 GB RAM.

- vCenter server appliance with 2 vCPU and 24 GB RAM.

- NSX-T Manager medium deployment.

- NSX-T Edges with large form factor VM.

- Cloud Director large primary cell.

Prerequisites and preparations

Review part one of this blog series for the list of prerequisites needed before you can continue with the steps described in parts one and two.

Reference Architecture

A very high-level view of the Tanzu as a service offering with Cloud Director and CSE 4.0 is summarised in the diagram below. The idea is to deploy a CSE 4.0 vApp which interacts with the Cloud Director APIs to provide the capability to provision Tanzu guest clusters (TKGm) per tenant. The Cloud Director provider supplies the underlying networking and load balancing, either dedicated per tenant or shared across tenants, while every tenant is responsible for its own TKGm cluster deployment. Those TKGm clusters are bootstrapped by a temporary (ephemeral) VM which is created by the CSE extension and deleted automatically once the Tanzu cluster is up and running. There is no need to deploy a Tanzu management cluster per tenant. The Container Service Extension server is deployed in its own provider-managed Org and needs connectivity to Cloud Director only (no internet connectivity required).

A detailed reference architecture, which I used in my home lab and which is referenced throughout this blog post, can be seen below:

A couple of remarks regarding the above architectural design:

- NSX ALB controller(s) are omitted from the above diagram since they are deployed in my home lab management network. They must of course be reachable from Avi SE mgmt T1 via Toasti T0.

- Avi SE mgmt T1 is a dedicated T1 gateway connected to a dedicated T0 gateway only for Avi service engines.

- Default Service Subnet (192.168.255.0/25) is a system-provisioned subnet to which the Avi service engine data interfaces are connected. This subnet is also connected to every tenant edge gateway (in the case of dedicated tenant edges) or shared among all tenants if they share the same edge gateway.

- Tenant Edge gateway (for example Pindakaas-EDGE, HARIBO-EDGE and CSE-EDGE) is a T1 router that Cloud Director creates on NSX-T to back the infrastructure for every tenant. This tenant edge (T1) acts as the gateway for the tenant's routed networks.

- External Networks (192.169.100.0/24 pool): an external network is an uplink from an edge gateway (T1) to the provider gateway (NSXBaaS GW). You need to assign a group of IPs from that pool to every tenant edge gateway and add a NAT rule on every edge gateway that translates internal networks to an address in the external pool, so that VDC networks can reach external resources (the Cloud Director address and the internet). More about external networks can be found in the VMware documentation.

- The CSE Server Org is a provider-managed org in which the CSE vApp needs to be created. The CSE 4.0 Server vApp requires access to the Cloud Director API (Cloud Director IP) and does not require internet access. The CSE server vApp is connected to an internal VDC routed network 172.100.100.0/24, which is NATed through the CSE-EDGE gateway to an address from the EXT network pool (see the green EXT network pool).

- TKG-Nodes-NET (10.210.0.0/24 for Pindakaas and 10.200.0.0/24 for Haribo) is an internal routed network that needs to be created in every tenant; this is where the Tanzu nodes and the ephemeral VM are connected. This network needs internet access and access to the Cloud Director IP address. Important note: currently this network supports only static IP pool allocation, not DHCP.
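Since the design relies on several routed and NATed subnets living side by side, a quick sanity check that none of them overlap can save some troubleshooting later. The sketch below is only an illustration using Python's standard `ipaddress` module; the subnet values come from the remarks above, while the dictionary labels and the function name `find_overlaps` are my own and purely illustrative.

```python
import ipaddress
from itertools import combinations

# Subnets taken from the reference architecture remarks above.
SUBNETS = {
    "Default Service Subnet": "192.168.255.0/25",
    "External Networks pool": "192.169.100.0/24",
    "CSE server network": "172.100.100.0/24",
    "TKG-Nodes-NET (Pindakaas)": "10.210.0.0/24",
    "TKG-Nodes-NET (Haribo)": "10.200.0.0/24",
}


def find_overlaps(subnets):
    """Return every pair of subnet names whose address ranges overlap."""
    parsed = {name: ipaddress.ip_network(cidr) for name, cidr in subnets.items()}
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(parsed.items(), 2)
        if net_a.overlaps(net_b)
    ]


if __name__ == "__main__":
    clashes = find_overlaps(SUBNETS)
    print("overlapping subnets:", clashes or "none")
```

If you add more tenants, extending the dictionary with their TKG-Nodes-NET ranges keeps the same check useful.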

Deployment Workflow

The deployment workflow is broken down into four major steps:

- Onboarding a test tenant (Pindakaas) with relevant networking configuration (Covered in Part I of this blog post series).

- CSE 4.0 server preparations and deployment. (Covered in Part I of this blog post series).

- VMware NSX Advanced Load Balancer integration with Cloud Director (Covered in Part II of this blog post series).

- Enabling Tenants to deploy Tanzu Kubernetes Cluster on Cloud Director (Covered in Part II of this blog post series).

VMware NSX ALB integration with Cloud Director

Cloud Director version 10.2 offered native integration with NSX ALB (Avi) to provide load balancing across tenant workloads. The integration requires Cloud Director networking to be backed by NSX-T and NSX-T to be added as a cloud provider in NSX ALB. For service providers to offer Tanzu or native Kubernetes as a service using Cloud Director and CSE, they need to use NSX ALB (Avi) as the load balancer for Tanzu/Kubernetes clusters.

Step 1: Prepare NSX-T for integration with NSX ALB

At a high level, as per the above architecture diagram, the following configuration needs to be completed on NSX-T before it can be added as a cloud provider in NSX ALB:

- Dedicated Tier-0 Logical Router for AVI Service Engines (Recommended but not a must, in my setup I use a single T0 for my NSX setup).

- Tier-1 gateway (in my setup it is called Avi SE mgmt) attached to the Tier-0 from the first step; this will be the default gateway for the Avi service engines.

- A DHCP-enabled logical segment (in my setup also called Avi SE mgmt) for the Avi SE management interfaces, connected to the Tier-1 created in step 2. IPs defined in the DHCP scope will be assigned to the management interfaces of deployed service engines. Configuring a local segment DHCP server is covered in the VMware docs.

From my NSX-T manager, the network topology for service engine management network connectivity is shown below:

Step 2: Add NSX-T instance to Avi as Cloud Provider

If you do not already have an NSX-T cloud instance integrated with NSX ALB, you need to add one (the same one used by your Cloud Director instance). Before adding an NSX-T Cloud, create two user-defined credentials in NSX ALB: one to authenticate with NSX and another to authenticate with the vCenter instance that NSX ALB will use to deploy service engines. To create custom user credentials, navigate in the NSX ALB UI to Administration and then, under User Credentials, click on CREATE to create the two user credentials as explained above.

Repeat the same for the vCenter user; when done you should see a screen similar to the one below:

Now we need to add our NSX-T instance as an NSX-T cloud in NSX ALB. To do that, navigate to Infrastructure > Clouds, click on CREATE and choose NSX-T Cloud.

Fill in your NSX-T and vCenter information and use the credentials we created for both instances. Important note: make sure to add NSX-T by IP address or FQDN exactly as you previously added it in Cloud Director. If your NSX-T is added in Cloud Director by its FQDN, you have to add it by FQDN in NSX ALB as well, otherwise Cloud Director will not be able to pull NSX-T related information from NSX ALB at a later step.

In my setup I also ticked the DHCP check box, since I configured the NSX-T segment to which the Avi service engines are attached to use DHCP.
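Because this mismatch is easy to overlook, here is a small helper I would use to compare the two registrations before moving on. It is only a sketch: it does not talk to Cloud Director or NSX ALB, it simply compares the two strings you entered, and the function names and the example addresses are hypothetical.

```python
import ipaddress


def address_kind(value):
    """Classify a manager address as 'ip' or 'fqdn'."""
    try:
        ipaddress.ip_address(value.strip())
        return "ip"
    except ValueError:
        return "fqdn"


def compare_nsx_registration(vcd_address, alb_address):
    """Compare how NSX-T was registered in Cloud Director and in NSX ALB.

    The comparison is deliberately strict (exact string match), mirroring the
    advice to register NSX-T exactly the same way in both products.
    """
    vcd, alb = vcd_address.strip(), alb_address.strip()
    if vcd == alb:
        return "ok"
    if address_kind(vcd) != address_kind(alb):
        return "mismatch: one side uses an IP address, the other an FQDN"
    return "mismatch: same address type but different values"


if __name__ == "__main__":
    # Hypothetical example values, not taken from my lab.
    print(compare_nsx_registration("nsx.lab.example", "10.0.0.10"))
```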

We then need to specify which NSX T1 gateway and L2 segment to use for service engine management interface connectivity; both the T1 and the segment have been pre-configured in NSX-T. The data network T1 and overlay segment I selected for the service engines are “dummy” objects, i.e. they will not really be used. They are needed because the NSX-T Cloud workflow cannot be saved without specifying a T1 and segment for the SE data network. In the integration with Cloud Director, however, the layer 2 segment (192.168.255.0/25, as shown in the reference architecture) is created automatically when we enable the load balancer service on our Pindakaas tenant. So this is a “workaround” to be able to finalise the addition of NSX-T as a cloud in Avi, and as mentioned it will be corrected later by Cloud Director.

In the next section add your vCenter instance and then click SAVE.

NSX ALB will take a couple of seconds to add and sync with the NSX-T cloud; when done, the status circle should turn green.

As a last step, I created a service engine group Pindakaas-SE-Group under my newly created NSX-T cloud (but you can use the default SE group).

Step 3: Add NSX ALB as Load Balancer in Cloud Director and enable Load balancer service on Tenant Pindakaas

Log in to the Cloud Director provider portal, click on Resources > Infrastructure Resources, then under NSX-ALB click on Controllers and then on ADD.

Fill in your ALB controller information (make sure that you have an Enterprise license) and then click on SAVE.

Note: if Cloud Director fails to add your controller and you are not using CA-signed SSL certificates, you might need to generate a new self-signed SSL certificate for your Avi controller. I describe how to generate a new self-signed Avi controller certificate in one of my previous blog posts HERE.

Once our NSX ALB controller is added, we also need to add our NSX-T cloud to Cloud Director. From the same window, click on NSX-T Clouds and then on ADD.

You should see your NSX-T cloud added

Next we need to add the service engine group which we defined under the NSX-T cloud in NSX ALB. Click on Service Engine Groups and then click on ADD.

Then navigate to Cloud Resources > Edge Gateways and click on Pindakaas-EDGE. From the left pane, under Load Balancer, click on General Settings, move the slider to activate the load balancer state and click SAVE.

Next I need to add a service engine group from the newly added NSX ALB to Cloud Director and assign it to the edge gateway serving my tenant Pindakaas. Click on Service Engine Groups and then on ADD; this opens a window where we select the name of our newly added NSX ALB controller and our Pindakaas-SE-Group created in the previous step:

You should see a screen similar to the one below:

If you navigate to NSX-T you should see a system-created segment with subnet 192.168.255.0/25 attached to the Pindakaas edge gateway T1 router.

If you now navigate back to your NSX ALB UI and check the NSX-T Cloud settings, you should see that the correct edge gateway and segment for service engine placement have been added. Make sure to remove the dummy T1 and segment we used earlier.

With this we have prepared our Cloud Director instance with the NSX ALB and NSX-T cloud integration, and enabled load balancer capability on the edge gateway of our tenant Pindakaas. We are now all set to deploy our Tanzu TKGm cluster inside tenant Pindakaas and conclude the last step in the deployment workflow.

Enabling Tenants to deploy Tanzu Kubernetes Cluster on Cloud Director

Step 1: Create an organisation administrator user with Kubernetes rights

To allow organisation (tenant) users to create Tanzu clusters within a tenant VDC, we need to create an Org user and assign the roles below to it:

Also make sure to publish the following Rights Bundles to the organisations in which Tanzu/Kubernetes clusters can be created:

Make sure the right Preserve All ExtraConfig Elements During OVF Import and Export is added to both the Org Admin role and the rights bundle published to the tenants.

In my setup, I created a user called nsxbaas, which is an organisation admin for tenant Pindakaas with Kubernetes rights, and I will use nsxbaas to create my TKGm cluster. For more details about how to create an Org user with the appropriate Tanzu rights and roles, you can refer to one of my previous blog posts HERE.

Step 2: Deploy a Tanzu Kubernetes Cluster to an Org VDC

Log in to your tenant portal using the above user and navigate to More > Kubernetes Container Clusters.

Click on NEW; you should then see VMware Tanzu Kubernetes Grid appearing as an available Kubernetes provider.

Set the cluster name and pick a TKG template to use for your cluster (these are the OVA images we uploaded earlier to our shared catalog).

Choose which vApp network to connect the Tanzu nodes to. Remember, this network needs to have internet connectivity.

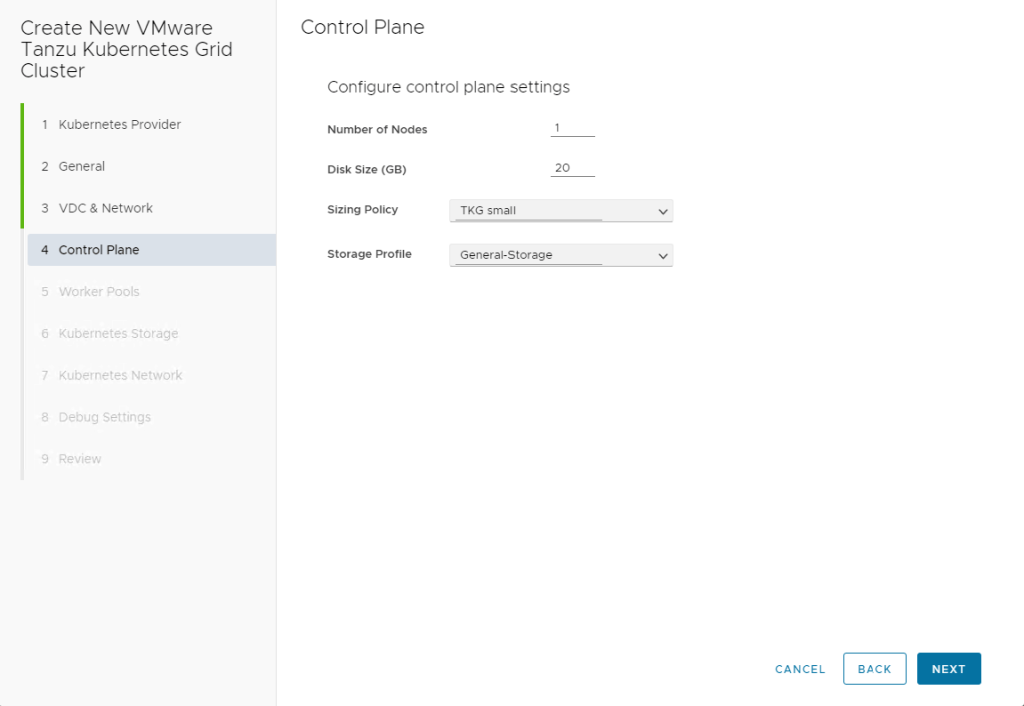

Set the number of control plane nodes and then click NEXT.

Set the number of Worker nodes and create any additional worker pools if needed.

Create a default storage class to be used for your Tanzu clusters inside that tenant and then click NEXT.

Set your Pods CIDR (this will be used by the Antrea CNI to assign IPs to pods) and define your Services CIDR (used for Kubernetes cluster service IPs).
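When picking these two ranges, it helps to confirm that they do not collide with each other or with the tenant's node network, and to get a feel for how much address space they provide. The sketch below is an illustration only: the pods and services values in the example are placeholders I chose (not values taken from my setup), the node network is the Pindakaas TKG-Nodes-NET from the reference architecture, and the per-node /24 pod block size is an assumption based on the common Kubernetes default.

```python
import ipaddress


def summarize_cluster_cidrs(pods_cidr, services_cidr, nodes_cidr, node_prefix=24):
    """Report overlaps between the three ranges plus some rough capacity numbers.

    node_prefix is the assumed size of the pod block handed to each node.
    """
    nets = {
        "pods": ipaddress.ip_network(pods_cidr),
        "services": ipaddress.ip_network(services_cidr),
        "nodes": ipaddress.ip_network(nodes_cidr),
    }
    names = list(nets)
    overlaps = [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if nets[a].overlaps(nets[b])
    ]
    pods_prefix = nets["pods"].prefixlen
    return {
        "overlaps": overlaps,
        # Total addresses in the services range.
        "service_ips": nets["services"].num_addresses,
        # How many per-node pod blocks fit into the pods range.
        "node_pod_blocks": 2 ** (node_prefix - pods_prefix) if node_prefix >= pods_prefix else 0,
    }


if __name__ == "__main__":
    # Placeholder pods/services ranges; node network from the reference architecture.
    print(summarize_cluster_cidrs("100.96.0.0/11", "100.64.0.0/13", "10.210.0.0/24"))
```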

If you disable Auto Repair, the cluster creation process will not try to resolve any errors it encounters, which gives you a chance to troubleshoot the issue manually. If Auto Repair is enabled, the Tanzu creation workflow will restart cluster creation when errors are encountered to try to resolve them.

Review workflow parameters and if all good then click on FINISH.

Provisioning a Tanzu cluster is a lengthy process, as it includes the following steps:

- Creating a vApp for Cluster deployment

- Creating and configuring a temporary bootstrap VM (ephemeral VM) in which a KinD cluster is created, which eventually deploys the Tanzu cluster nodes.

- Service engines are deployed and a corresponding virtual service is provisioned for every Tanzu cluster.

- Tanzu/Kubernetes cluster configuration is finalised across control and worker nodes.

- Cleanup phase in which Ephemeral VM is deleted.
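If you prefer to wait for this workflow from a script instead of refreshing the portal, a generic polling loop is enough. The sketch below is deliberately not tied to any Cloud Director or CSE API: `get_status` is a hypothetical callable that you would have to wire to however you query the cluster state, and only the target status Available comes from what the portal shows. The intermediate status names in the example are made up.

```python
import time


def wait_for_status(get_status, wanted="Available", timeout_s=45 * 60, interval_s=60,
                    sleep=time.sleep, clock=time.monotonic):
    """Poll get_status() until it returns `wanted` or the timeout expires.

    sleep and clock are injectable so the loop can be exercised without waiting.
    """
    deadline = clock() + timeout_s
    while True:
        status = get_status()
        if status == wanted:
            return status
        if clock() >= deadline:
            raise TimeoutError(f"last status was {status!r}, expected {wanted!r}")
        sleep(interval_s)


if __name__ == "__main__":
    # Simulated status sequence with made-up intermediate names; no real API is called.
    fake_states = iter(["Pending", "Creating", "Available"])
    print(wait_for_status(lambda: next(fake_states), sleep=lambda s: None))
```

The default timeout of 45 minutes simply matches the upper end of what I observed in my lab; adjust it to your environment.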

In my setup this took between 30 and 45 minutes, so be patient. After a successful deployment you should see your cluster listed with status Available.

Step 3: Verify Tanzu Cluster deployment

From vCenter you can verify that the control plane and worker node VMs are successfully deployed and running, along with the Avi service engines:

To log in to our TKG cluster, navigate back to the tenant portal and click on More > Kubernetes Container Clusters, then click on our cluster pindakaas-tkgcluster01 and then on DOWNLOAD KUBE CONFIG.

This downloads the kube configuration file for our cluster. Copy it to a machine with kubectl installed and run kubectl against it (for example kubectl get nodes with the --kubeconfig flag) to verify cluster status and start deploying workloads.
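For a scripted check from the jumpbox, kubectl can be pointed at the downloaded file with its `--kubeconfig` flag. The wrapper below is a minimal sketch of that idea; the helper names and the kubeconfig file name in the usage note are hypothetical, and it assumes kubectl is on the PATH of the machine running it.

```python
import subprocess


def kubectl_command(kubeconfig_path, *args):
    """Build a kubectl argument list that targets the downloaded kubeconfig file."""
    return ["kubectl", "--kubeconfig", str(kubeconfig_path), *args]


def run_kubectl(kubeconfig_path, *args):
    """Run kubectl and return its stdout; raises CalledProcessError on failure."""
    result = subprocess.run(
        kubectl_command(kubeconfig_path, *args),
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout
```

For example, `print(run_kubectl("kubeconfig-pindakaas-tkgcluster01.txt", "get", "nodes", "-o", "wide"))` would list the control plane and worker nodes, assuming that is the name under which you saved the file.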

With this we have come to the end of this long (but hopefully useful) blog post series. Follow my blog or my social media channels to stay updated about my work.

Cheers,