Overview

With the release of vSphere 8, VMware introduced Tanzu Kubernetes Grid (TKG) 2. With TKG 2 you can integrate the supervisor cluster with an external identity provider via OpenID Connect (OIDC), an identity layer built on top of OAuth 2.0. This allows organisations to use separate users and groups for the developers who need to deploy and work on Tanzu workload clusters. This segregation lets customers use one identity source for vSphere environment administration (LDAP, AD, SSO and so on) and an OIDC identity provider such as Workspace ONE, Okta or Dex for developer access.

The vSphere 8 with Tanzu supervisor cluster leverages Project Pinniped to implement OIDC integration with external providers. Pinniped is an authentication service for Kubernetes clusters which allows you to bring your own OIDC, LDAP, or Active Directory identity provider to act as the source of user identities. A user’s identity in the external identity provider becomes their identity in Kubernetes. All other aspects of Kubernetes that are sensitive to identity, such as authorisation policies and audit logging, are then based on the user identities from your identity provider. A major advantage of using an external identity provider to authenticate user access to your Tanzu/Kubernetes clusters is that kubeconfig files do not contain any specific user identity or credentials, so they can be safely shared.

In VMware's implementation of the Pinniped service within the vSphere with Tanzu supervisor cluster, you do not need to (and cannot) directly create or configure Pinniped CRDs or pods in the supervisor cluster; these are created for you once you configure your identity provider from the Workload Management interface in vCenter.

vSphere 8 with Tanzu supports the following identity providers:

- VMware WorkspaceONE Access

- Okta

- Dex

- GitLab

- Google OAuth

In this blog post I will go through the steps needed to integrate a vSphere 8 with Tanzu supervisor cluster with Workspace ONE Access, and then create a test workload cluster (TKC) as a user who is authenticated by my Workspace ONE Access instance. The user I am using to authenticate to Tanzu clusters is a domain user which is synchronised between my Active Directory and WS1 Access.

Lab Inventory

For software versions I used the following:

- VMware ESXi 8.0 IA

- vCenter server version 8.0 IA

- VMware WorkspaceONE Access 22.09.10

- Windows Server 2012R2 running Active Directory Service integrated with WS1 Access.

- WorkspaceONE Windows Connector

- VMware NSX 4.0.1.1

- TrueNAS 12.0-U7 used to provision NFS data stores to ESXi hosts.

- VyOS 1.4 used as lab backbone router and DHCP server.

- Ubuntu 20.04.2 LTS as DNS and internet gateway.

- Windows 10 Pro as management host for UI access.

- Ubuntu 18.04 as Linux jumpbox for running tanzu and kubectl cli.

For virtual hosts and appliances sizing I used the following specs:

- 3 x ESXi hosts each with 12 vCPUs, 2 x NICs and 128 GB RAM.

- vCenter server appliance with 2 vCPU and 24 GB RAM.

Deployment Workflow

- Deploy WorkspaceONE Access and WorkspaceONE Access connector and ensure that your AD users and groups are synchronised with WS1 Access successfully.

- I will not go into detail on how to deploy WS1 Access and integrate it with AD; however, you can check this blog post on how to do that.

- Create a Web App pointing to WCP pinniped service as OIDC client.

- Add WorkspaceONE Access as an external identity provider for vSphere with Tanzu supervisor cluster.

- Create a namespace in vSphere and assign user and groups from WS1 identity provider.

- Install Tanzu cli and connect to vSphere with Tanzu supervisor cluster using an OIDC user from WS1 Access and obtain access token.

- Create a workload TKC cluster using OIDC user.

Preparing WorkspaceONE Access as an OIDC Identity Provider

Step 1: Ensure that your Directory Service is successfully synced with WS1 Access

Log in to your WS1 Access admin console, then navigate to Integrations > Connectors and ensure that the WS1 connector installed on your Active Directory DC is synced and successfully connected to your WS1 Access:

Also check that your AD directory is syncing successfully with WS1 Access by clicking on Directories (in the left pane).

You should be able to list the user groups.

Step 2: Adding vSphere with Tanzu Supervisor cluster as an OIDC Web App

The vSphere with Tanzu supervisor cluster needs to be added as an OIDC (OpenID Connect) client to WS1 Access. To do this, navigate to Resources > Web Apps and click on NEW to create a new Web App.

Assign a Name to your new OIDC Web App and click NEXT

The values below are very important, so I will explain them one by one to make clear what each one does.

Authentication Type: For our TKGS integration we need to select OpenID Connect as the authentication type from the available list.

Target URL: By definition this is the remote web application URL to which WS1 Access should send users. It is not used in our scenario, so you can set it to the same value as the Redirect URL, which we will talk about next.

Redirect URL: This is very important, since this is the URL served by the Pinniped supervisor pods running in our TKGS supervisor cluster, and WS1 Access will redirect back to that URL with the authentication response. The TKGS Supervisor acts as an OAuth 2.0 client to the external identity provider, and the Supervisor Callback URL is the redirect URL used to configure the external IdP. The Callback URL can be retrieved from vCenter by navigating to “Workload Management” > “Supervisors”.

Click on the name of the Supervisor (in my lab this is called chocopasta), navigate to Configure, click on Identity Providers, then copy the Callback URL shown and paste it back into the WS1 Access Web App creation wizard.

Client ID: This is a user-defined identifier for the OIDC client (the supervisor cluster). I used pinniped-supervisor.

Client Secret: This is a shared secret that the Pinniped supervisor will use to authenticate to WS1 Access. To generate a secure value (make sure NOT to base64 encode it), run the following command on a Linux machine to produce a random hex string:

openssl rand -hex 32
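If openssl is not available on the machine you are working from, a comparable secret can be produced with Python's standard library. This is only a minimal sketch and not part of the VMware procedure; the function name generate_client_secret is my own.

```python
import secrets


def generate_client_secret(num_bytes: int = 32) -> str:
    """Return a random hex string, comparable to `openssl rand -hex 32`."""
    # token_hex reads from the OS random source and emits two hex digits per byte.
    return secrets.token_hex(num_bytes)


if __name__ == "__main__":
    print(generate_client_secret())
```

With the default of 32 bytes the result is a 64-character hex string, which can be pasted as-is into the Client Secret field.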

Make sure to disable both “Open in Workspace ONE Web” and “Show in User Portal”, then click on NEXT and choose which access policy should be applied to this Web App. I just used the default access policy, which is good enough in a testbed environment.

Click NEXT and then choose SAVE & ASSIGN to assign this web app to a user and group. Important: Make sure to assign this web app to the domain user as well as to the groups they are a member of.

This is what my user and group web app assignments look like:

Step 3: Configure token TTLs and scopes for pinniped-supervisor OIDC client

The last step in the WS1 Access console is to configure some parameters for the OIDC client we just created. Navigate to Settings > Remote App Access and click on your OIDC client name.

This will redirect you to the OAuth 2 Client configuration page, click on EDIT next to CLIENT CONFIGURATION

Make sure to un-check “Prompt users for access” so that you do not need to manually approve authentication requests coming from the vSphere Pinniped supervisor to acquire a token. You can also adjust the access token TTLs as per your environment requirements. Click Save and then click on EDIT next to SCOPE.

For our TKGS supervisor cluster, we need to make sure that we select Email and Group in the scope as this is the authentication info that Tanzu will be requesting during authentication to supervisor and workload clusters later. Click Save.

At this stage we have finalised our WS1 Access OIDC client configuration and we are ready to configure Identity Provider setting on TKGS supervisor cluster in vCenter.
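To make the relationship between the Client ID, the Redirect URL and the scopes more concrete, here is a rough sketch of how an OAuth 2.0 authorization-code request is assembled from them. It is an illustration of the standard flow, not Pinniped's actual code. The endpoint and callback hosts are placeholders rather than values from my lab, I am assuming the standard openid scope is sent alongside email and group, and a real request also carries extra parameters such as state and nonce.

```python
from urllib.parse import urlencode


def build_authorization_url(authorize_endpoint, client_id, redirect_uri, scopes):
    """Assemble an OAuth 2.0 authorization-code request URL."""
    query = urlencode({
        "response_type": "code",       # authorization code flow
        "client_id": client_id,        # Client ID defined in the Web App
        "redirect_uri": redirect_uri,  # must match the registered Redirect URL
        "scope": " ".join(scopes),     # scopes are space-separated
    })
    return f"{authorize_endpoint}?{query}"


if __name__ == "__main__":
    # Placeholder hosts and paths, used for illustration only.
    print(build_authorization_url(
        "https://ws1.example.test/authorize",
        "pinniped-supervisor",
        "https://supervisor.example.test/callback",
        ["openid", "email", "group"],
    ))
```

If the redirect_uri sent here differs from the Redirect URL registered in the Web App, the identity provider is expected to reject the request, which is why copying the Callback URL exactly matters.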

Configure WS1 Access as Identity Provider for TKGS Supervisor Cluster

Step 1: Add WS1 Access as Identity Provider for Supervisor Cluster

In the vCenter UI navigate to “Workload Management” > “Supervisors”, click on the name of the Supervisor (in my lab this is called chocopasta), navigate to Configure, click on Identity Providers and then on ADD Identity Provider. In Provider Configuration, add a name for your identity provider, the issuer URL, and the username and groups claims (these values are explained under the screenshot).

Provider Name: This is just a name by which the IdP will be identified in vCenter.

Issuer URL: This is tricky, since every IdP (Identity Provider) uses a different URL suffix for OAuth 2.0 client requests. For WS1 Access it is https://<WS1 Access FQDN>/SAAS/auth

Username Claim: Here I am using the string “email”, which means that I need to provide my OIDC username in email format when authenticating.

Groups Claim: using “group_names” as string means that during OIDC authentication process group membership is also claimed and will be used to identify user group membership.
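As a rough illustration of what the two claim settings do, the sketch below picks a username and a group list out of an already decoded token payload using the claim names configured above. It is not Pinniped's implementation; the function name resolve_identity and the sample group name are made up for the example.

```python
def resolve_identity(claims, username_claim="email", groups_claim="group_names"):
    """Pick the username and groups out of a decoded token payload."""
    if username_claim not in claims:
        # Typically means the matching scope was not requested or granted.
        raise KeyError(f"token has no '{username_claim}' claim")
    groups = claims.get(groups_claim, [])
    if isinstance(groups, str):
        # Normalise a single group name to a one-element list.
        groups = [groups]
    return claims[username_claim], list(groups)


if __name__ == "__main__":
    sample_claims = {
        "email": "nsxbaas@nsxbaas.homelab",
        "group_names": ["tkg-developers"],  # hypothetical group name
    }
    print(resolve_identity(sample_claims))
```

The username and groups resolved this way are the names you later assign permissions to on the vSphere namespace.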

Once you have defined the above values, click NEXT, this will take you to the next step where you need to define OAuth2.0 client ID (which we created earlier in WS1 Access web app) which is pinniped-supervisor in my homelab. In addition, you also need to paste the client secret we generated and used earlier in WS1 web app configuration.

Click NEXT. You then need to add “group, email” to the scope of the request, since we are authenticating using email and we need group membership information. For the Certificate Authority you need the WS1 Access CA certificate; you can simply browse to your WS1 Access FQDN over HTTPS and download its CA certificate using your web browser.

Click NEXT, review the configuration and, if all is good, click FINISH.
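One way to sanity-check the issuer URL entered in the wizard is to derive the OIDC discovery document URL from it and open that in a browser. Under the OpenID Connect Discovery specification, the document lives at the issuer URL plus /.well-known/openid-configuration. The helper below is a small sketch of that rule; the host name in the example is a placeholder, not my lab's FQDN.

```python
from urllib.parse import urlsplit


def discovery_url(issuer: str) -> str:
    """Derive the OIDC discovery document URL from an issuer URL."""
    parts = urlsplit(issuer)
    if parts.scheme != "https" or not parts.netloc:
        raise ValueError(f"issuer must be an absolute https URL, got {issuer!r}")
    # Drop any trailing slash before appending the well-known path.
    return issuer.rstrip("/") + "/.well-known/openid-configuration"


if __name__ == "__main__":
    # Placeholder FQDN; substitute your own WS1 Access host.
    print(discovery_url("https://ws1.example.test/SAAS/auth"))
```

The issuer value inside the returned JSON document should match the Issuer URL you typed into vCenter.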

Step 2: Create a vSphere with Tanzu namespace and assign user permissions from WS1 Access IdP

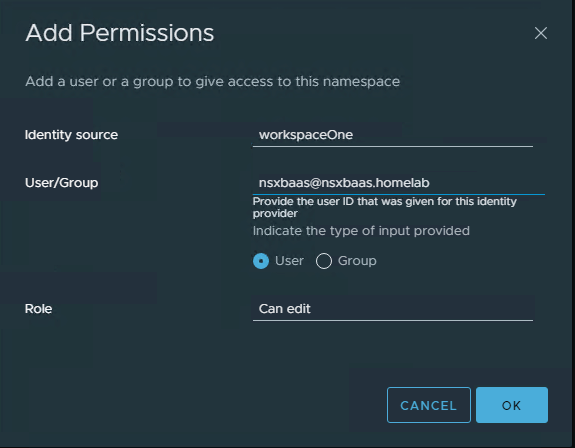

Next, we need to assign our domain user (nsxbaas@nsxbaas.homelab) to a namespace under Workload Management in vCenter. From the vCenter UI navigate to Workload Management and, under Namespaces, either create a new namespace or click on an existing one. In my setup, I will use an existing namespace called “idp”, which I created for this post.

Click on the namespace, navigate to the Permissions tab and start adding your domain users and corresponding groups by clicking on ADD. Make sure to choose the identity provider you created in step 1 before you start adding your users and groups.

Repeat this to add the groups this user is a member of.

Your Namespace permissions should look similar to the below

Step 3: Login to vSphere supervisor cluster and deploy a test TKC cluster using domain user

The last step is to log in to our vSphere supervisor cluster using an authentication token generated by WS1 Access and use a domain user (nsxbaas@nsxbaas.homelab) to create a workload TKC. For this you will need to install the Tanzu CLI on a jumpbox; in my lab I am using an Ubuntu 18.04 machine. For more details on how to install the Tanzu CLI, refer to the VMware documentation.

From my Ubuntu Jumpbox I will run the following command:

tanzu login --endpoint https://172.10.110.2 --name nsxbaas@nsxbaas.homelab

Here 172.10.110.2 is the IP of my supervisor cluster and nsxbaas@nsxbaas.homelab is the OIDC user which I want to authenticate against WS1 Access (our cluster identity provider). The screenshot below is a bit different from what you will get, because in my lab I had already created the nsxbaas@nsxbaas.homelab Tanzu server instance, so I can just log in to it again.

As you can see, pinniped supervisor returned a URL which you need to copy and paste in your browser in order to acquire the above authorisation token, so I copied and pasted the above URL and below is what was returned from my WS1 Access:

The above is the access token which is valid for 5 hours (as I configured it under the remote app access settings in WS1 Access earlier in this post).

Once our login is successful I will deploy a test TKC cluster using the above user, for this I am going to use the following YAML file:

apiVersion: cluster.x-k8s.io/v1beta1
kind: Cluster
#define the cluster
metadata:
  #user-defined name of the cluster; string
  name: tkc-w-idp
  #kubernetes namespace for the cluster; string
  namespace: idp
#define the desired state of cluster
spec:
  #specify the cluster network; required, there is no default
  clusterNetwork:
    #network ranges from which service VIPs are allocated
    services:
      #ranges of network addresses; string array
      #CAUTION: must not overlap with Supervisor
      cidrBlocks: ["198.51.100.0/12"]
    #network ranges from which Pod networks are allocated
    pods:
      #ranges of network addresses; string array
      #CAUTION: must not overlap with Supervisor
      cidrBlocks: ["192.0.2.0/16"]
    #domain name for services; string
    serviceDomain: "cluster.local"
  #specify the topology for the cluster
  topology:
    #name of the ClusterClass object to derive the topology
    class: tanzukubernetescluster
    #kubernetes version of the cluster; format is TKR NAME
    version: v1.23.8---vmware.2-tkg.2-zshippable
    #describe the cluster control plane
    controlPlane:
      #number of control plane nodes; integer 1 or 3
      replicas: 1
    #describe the cluster worker nodes
    workers:
      #specifies parameters for a set of worker nodes in the topology
      machineDeployments:
        #node pool class used to create the set of worker nodes
        - class: node-pool
          #user-defined name of the node pool; string
          name: node-pool-1
          #number of worker nodes in this pool; integer 0 or more
          replicas: 1
    #customize the cluster
    variables:
      #virtual machine class type and size for cluster nodes
      - name: vmClass
        value: best-effort-small
      #persistent storage class for cluster nodes
      - name: storageClass
        value: k8s-storage
      # default storageclass for control plane and worker node pools
      - name: defaultStorageClass
        value: k8s-storage

I then applied the above cluster creation file
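The CAUTION comments in the manifest ask that the services and pods ranges do not overlap with the Supervisor. A quick way to check this before applying a manifest is sketched below using Python's ipaddress module. The function name find_overlaps is my own, and the Supervisor range shown is a hypothetical placeholder, so substitute the ranges from your own Workload Management configuration.

```python
from ipaddress import ip_network
from itertools import combinations


def find_overlaps(named_cidrs):
    """Return pairs of names whose CIDR ranges overlap."""
    # strict=False accepts CIDRs written with host bits set.
    networks = {name: ip_network(cidr, strict=False)
                for name, cidr in named_cidrs.items()}
    return [(a, b)
            for (a, net_a), (b, net_b) in combinations(networks.items(), 2)
            if net_a.overlaps(net_b)]


if __name__ == "__main__":
    ranges = {
        "tkc-services": "198.51.100.0/12",      # from the manifest above
        "tkc-pods": "192.0.2.0/16",             # from the manifest above
        "supervisor-services": "10.96.0.0/23",  # hypothetical placeholder
    }
    print(find_overlaps(ranges))
```

An empty list means no two ranges collide; any pair that is returned names the ranges that need to be changed.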

After the above TKC cluster is created you can see that the control plane and worker nodes are up and running successfully

Hope you have found this post useful.
