Overview

vSphere availability zones (vSphere Zones) were introduced in vSphere 8 to provide high availability for Tanzu workloads across clusters. Each cluster is mapped to a zone. The clusters do not have to be co-located in the same physical datacenter, but they must sit under the same logical datacenter construct, and latency between sites must not exceed 100 ms. This provides failover and resiliency against localised hardware and software failures. When a zone (that is, a vSphere cluster) goes offline, the Supervisor detects the failure and restarts workloads in another vSphere Zone. You can use vSphere Zones in environments that span distances, as long as the latency maximum is not exceeded.

Last year I discussed the same topic based on NSX networking and the standard NSX load balancers (check blog posts HERE and HERE). Implementing the same solution with DVS networking and NSX Advanced Load Balancer (Avi), however, adds some challenges around availability zone awareness and around how NSX ALB service engines should be distributed and deployed. In the event of a site/cluster failure, there must always be a “surviving” service engine in a site that is still up, so that Tanzu workloads switched over from the failed site remain accessible.

The challenge I am trying to solve: when NSX ALB (Avi) is the load balancer provider for vSphere with Tanzu (TKGS), it is usually deployed in write-access mode. This means the NSX ALB controller has write access to vCenter and deploys service engines on any host/cluster, so there is a good chance that two service engines end up in the same cluster. If you are unlucky and that cluster fails, your Tanzu clusters will not be accessible after they are switched over to a surviving site, since both service engines (the load balancer) will be down.

The above challenge can be solved using the following:

- Service Engines must be deployed manually by vCenter admin, which means NSX ALB controller needs to connect to vCenter cloud in No Access mode (no orchestrator).

- vCenter admins need to ensure that service engines are deployed in two separate clusters and must make sure that they always stay that way and never end up in the same cluster. This is achieved by using VM to Host “Must” affinity rules.
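To make the failure mode concrete, here is a minimal Python sketch of the placement condition those affinity rules are meant to guarantee. The service engine names, the placement mappings and both function names are hypothetical and exist only for illustration; nothing here talks to vCenter or to the NSX ALB controller.

```python
from collections import defaultdict


def clusters_with_multiple_ses(se_to_cluster):
    """Return the clusters that host more than one service engine."""
    by_cluster = defaultdict(list)
    for se, cluster in se_to_cluster.items():
        by_cluster[cluster].append(se)
    return {c: sorted(ses) for c, ses in by_cluster.items() if len(ses) > 1}


def survives_any_single_cluster_failure(se_to_cluster):
    """True if at least one SE stays up whichever single cluster fails."""
    return len(set(se_to_cluster.values())) >= 2


# Hypothetical placements: one SE per zone versus both SEs in one zone.
good_placement = {"se-01": "zone01", "se-02": "zone02"}
bad_placement = {"se-01": "zone01", "se-02": "zone01"}

print(clusters_with_multiple_ses(bad_placement))
print(survives_any_single_cluster_failure(good_placement))
```

The second placement is what write-access mode can produce by chance, and it is the case the manual deployment plus “Must” rules are there to rule out.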

Reference Architecture

The screenshot below shows how the vSphere zones are deployed and, eventually, how the Tanzu workload clusters are spread across the three availability zones.

The following table lists the cluster/zone definitions of the setup I used in this blog post

Lab Inventory

For software versions I used the following:

- VMware ESXi 8.0U1

- vCenter server version 8.0U1

- VMware NSX ALB (Avi) 22.1.3

- TrueNAS 12.0-U7 used to provision NFS data stores to ESXi hosts.

- VyOS 1.3 used as lab backbone router and DHCP server.

- Windows Server 2019 as DNS server.

- Windows 10 Pro as management host for UI access.

For virtual hosts and appliances sizing I used the following specs:

- 6 x ESXi hosts each with 12 vCPUs, 2 x NICs and 128 GB RAM.

- vCenter server appliance with 2 vCPU and 24 GB RAM.

Deployment Workflow

- Create vSphere availability zones and zonal storage policy.

- Configure NSX ALB (Avi) with a No Orchestrator cloud and manually install NSX ALB Service Engines.

- Enable Zonal Workload Management along with zonal Namespace and zonal workload Tanzu Cluster.

- Configure Affinity Rules for service engines per cluster placement.

- Install AKO, deploy testing application and configure Ingress.

- Simulate a disaster recovery scenario and verify HA zones operation.

Create vSphere availability zones and zonal storage policy

First, we need to create 3 availability zones corresponding to the 3 clusters we have. From the vCenter UI, click on the vCenter server name at the top left of the inventory list > Configure > vSphere Zones in the left pane, then click on the “+” sign to start adding vSphere zones, one for every cluster you have. In my case I have 3 zones with 3 clusters, as shown earlier

As part of workload management enablement (i.e. deploying the supervisor cluster for TKGS) we need to define a storage policy, which Tanzu uses to identify which data stores should hold TKC volumes. With vSphere 8 zones, VMware introduced a new storage policy type called zonal storage policy, which defines storage policies across availability zones. This is the type we need to create in order to use our data stores with a multi-zonal Tanzu cluster. To create a zonal storage policy, the following requirements must be met:

- No data store sharing across sites (clusters), which means every cluster needs to have its own data stores.

- Data stores that are to be used as zonal storage (the ones the zonal storage policy will apply to) need to be tagged, and this tag needs to be used when creating the zonal storage policy so that the policy can identify the compatible data stores.
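The two requirements above can be expressed as a small pre-flight check. This is only a sketch over hand-written inventory data: the data store names and the function name are hypothetical, and the only value taken from this post is the tag k8s-storage, which I create further down.

```python
def check_zonal_datastores(cluster_datastores, datastore_tags, required_tag):
    """Return (shared, untagged) data stores that break the zonal requirements."""
    owner = {}
    shared = set()
    for cluster, datastores in cluster_datastores.items():
        for ds in datastores:
            # A data store seen under two different clusters is shared.
            if ds in owner and owner[ds] != cluster:
                shared.add(ds)
            owner.setdefault(ds, cluster)
    untagged = {
        ds for ds in owner
        if required_tag not in datastore_tags.get(ds, set())
    }
    return sorted(shared), sorted(untagged)


# Hypothetical inventory: one dedicated data store per cluster/zone.
layout = {
    "zone01": ["ds-zone01"],
    "zone02": ["ds-zone02"],
    "zone03": ["ds-zone03"],
}
tags = {
    "ds-zone01": {"k8s-storage"},
    "ds-zone02": {"k8s-storage"},
    "ds-zone03": set(),  # tag not assigned yet
}

print(check_zonal_datastores(layout, tags, "k8s-storage"))
```

An empty result for both lists means every zone has its own data store and each one carries the tag the policy will match against.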

Below screenshots show my 3 clusters (zones) each with a dedicated data store that will be used for the zonal storage policy that I am going to create

To create a zonal storage policy, we first need to tag each data store that will be used. In my setup I created a tag category called storage and defined a tag k8s-storage, which I will assign to every data store highlighted above. To create a new category and tag, navigate to the data stores and click on ACTIONS > Tags & Custom Attributes > Assign Tag

Then the Create Tag window will pop-up, click on “Create New Category” to create category storage and then create tag k8s-storage under that category as shown below

Then click on the zonal data stores and from the right pane click on ASSIGN under Tags and then assign tag k8s-storage for the 3 data stores we have

Repeat the above steps for all 3 data stores. Once all tags are assigned it is then time to create our zonal storage policy, from vCenter UI navigate to Policies and Profiles > VM Storage Policies and click on CREATE

Assign a name for your storage policy and then click NEXT

Choose the Enable tag based placement rules and Enable consumption domain options. This tells vSphere that we are creating a zonal storage policy

Click NEXT then choose storage from the tag category and choose k8s-storage tag which the storage policy will be matching against

Click NEXT, leave Storage topology type as zonal

Click NEXT, you should see list of compatible data stores showing our data stores which we tagged earlier with tag k8s-storage

Click NEXT, review the parameters and then click FINISH

Our availability zones and zonal storage policy are now created.

Configure NSX ALB (Avi) in No Orchestrator Cloud and Deploy Service Engines

Log in to your NSX ALB UI and navigate to Infrastructure > Clouds. The default cloud is of type No Orchestrator by default, and since we need to use the Default-Cloud for enabling Workload Management in vSphere, we do not need to change it. We only need to download the OVA of the service engines that we will be deploying manually across our availability zones. To download the service engine OVA, click on the small circle icon to the right of the default cloud and choose image type OVA.

NSX ALB will compose service engine OVA and once ready it will be downloaded to your local machine.

Once downloaded, we need to deploy service engine OVA in zone01 and zone02 so in total two service engines. From vCenter UI, right click any of the clusters and choose Deploy OVF Template and follow the steps to deploy the service engines

NSX ALB service engines have 10 network interfaces. The first is the management interface, through which the NSX ALB controller manages the service engine; the other 9 NICs are data interfaces.

Before moving to the next step in the OVF deployment workflow, we need to generate an authentication token from the NSX ALB controller cloud. The service engine uses this token to connect to the ALB controller, and it is valid for one hour only. If you have multiple ALB controller clusters, you also need to specify the cluster UUID to the service engine.

Copy and paste the above authentication token in the field for that in the below screenshot and fill out the rest of the configuration parameters. Note, in the screenshot I specified management IP configuration, however later on I decided to use DHCP for all my service engines interfaces. DHCP will be used in the rest of this blog post for all service engine interfaces (both management and data interfaces).

Review the below details and if all is good then click on FINISH to start service engine deployment.

Repeat the same process to deploy a second service engine. If all is good, you should see the service engines popping up in the NSX ALB UI under Infrastructure > Cloud Resources > Service Engines with their management IPs. And no, you cannot change how service engine names are displayed in the NSX ALB UI.

Note: if you use a patched NSX ALB controller version (for example 22.1.32p4), service engine registration might take a bit longer. The OVA image composed by the NSX ALB controller is always based on the base image release (i.e. 22.1.3), so once the service engines are deployed the controller upgrades them, hence the longer time to register.

Configure Service Engines from NSX ALB Controller

Next we need to configure and prepare the two deployed service engines from NSX ALB controller so that they can host virtual services. From NSX ALB UI navigate to Infrastructure > Cloud Resources > Service Engine and click on the small pen on the right of one of the service engines

In the screenshot below you can see that I set DHCP as the IP address management scheme for all my service engine data interfaces. If you are using static IP address assignment, you need to match the MAC address of each interface listed here against the ones in vCenter, to make sure you are setting the correct IP address on the correct service engine interface and the corresponding port group. This is not needed in WRITE-ACCESS cloud mode, since in that mode the NSX ALB controller has access to vCenter and can retrieve this information itself. That is not the case when your NSX ALB controller operates in No Orchestrator (No Access) mode.

Click on Save. Next, we need to configure a “usable” network for NSX ALB in order to use it to place VIPs of virtual services. VIPs cannot be assigned by DHCP and hence we need to create a static IP pool for VIP assignment. Remember, our NSX ALB controller does not have access to vCenter and hence cannot retrieve any networks or port groups, so we need to manually create a useable network and define a static IP pool under that network that will be used for VIP placement.

From ALB controller UI, navigate to Infrastructure > Cloud Resources > Networks and click on CREATE

I created a network called se-data-vips with DHCP as IP address management scheme and then added subnet 192.168.26.0/24 with a defined IP Pool range for VIPs IP assignment
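Since the VIP pool is typed in by hand, it is worth sanity-checking the range against the subnet before saving it. Below is a small sketch using Python's standard ipaddress module. The subnet 192.168.26.0/24 is the one from my lab, but the pool boundaries are placeholder values chosen for the example, and the function names are hypothetical.

```python
import ipaddress


def pool_in_subnet(subnet, first, last):
    """True if first..last is an ordered range of host addresses in subnet."""
    net = ipaddress.ip_network(subnet)
    lo = ipaddress.ip_address(first)
    hi = ipaddress.ip_address(last)
    reserved = (net.network_address, net.broadcast_address)
    return (
        lo <= hi
        and lo in net and hi in net
        and lo not in reserved and hi not in reserved
    )


def pool_size(subnet, first, last):
    """Number of VIPs in the pool, or 0 if the range is not valid."""
    if not pool_in_subnet(subnet, first, last):
        return 0
    return int(ipaddress.ip_address(last)) - int(ipaddress.ip_address(first)) + 1


# Placeholder pool boundaries inside the lab subnet.
print(pool_in_subnet("192.168.26.0/24", "192.168.26.100", "192.168.26.150"))
print(pool_size("192.168.26.0/24", "192.168.26.100", "192.168.26.150"))
```

Keep in mind that every virtual service, including the supervisor cluster API address and each Ingress, takes one address from this pool, so size it with some headroom.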

Create IPAM and finalise NSX ALB Controller Configuration

The last step in the ALB configuration is to create an IPAM profile and add it to the Default-Cloud. This is needed so that ALB knows which usable networks it can use to place the VIPs of the virtual services that will eventually be created. From the ALB UI navigate to Templates > IPAM/DNS Profiles and click on CREATE. Below is my IPAM profile configuration

Save the IPAM configuration then navigate to Infrastructure > Clouds > Default-Cloud and click on the pencil icon to the right of the Default-Cloud to edit cloud parameters and add the IPAM we just created

Click SAVE, our NSX ALB and service engines are now ready to be used.

Enable Zonal Workload Management & Create Zonal Namespace

In this section, we need to enable workload management on our 3 zones, which will result in creating a stretched supervisor cluster across the 3 zones/sites. During the process we will provide NSX ALB credentials so that vCenter can connect to it and make use of NSX ALB to provision cluster-api address which will be used to access the supervisor cluster. This address will be assigned from the static IP pool we created for VIPs earlier under network se-data-vips (192.168.26.0/24).

From vCenter UI, click on top left of screen and click on Workload Management

You should see the below screen

Click on GET STARTED and in the following screen choose vSphere Distributed Switch as networking layer

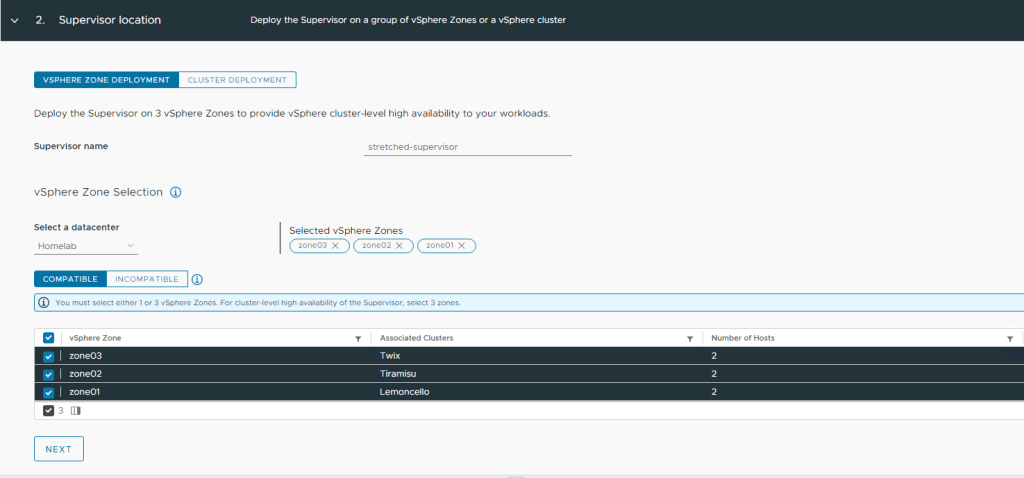

In Step 2 we need to choose our 3 availability zones that we defined earlier to deploy the stretched supervisor cluster on top of them

Next is the storage policy selection. The screenshot below shows a different storage policy than the one I created earlier in this post, but the concept is the same

After that you need to set your NSX ALB parameters

Step 5 is the IP configuration for the supervisor VMs

Step 6 is your workload network, this is where Tanzu worker nodes VMs will be getting IP configuration assigned

Choose the form factor of your supervisor VMs and then click FINISH

After the deployment is done, your supervisor cluster should acquire an IP from the NSX ALB se-data-vips IP pool we assigned earlier for VIPs. I then created a namespace called stretched-homelab with a workload Tanzu cluster to test. Below is what my setup eventually looked like, followed by the deployment YAML I used to deploy the zonal workload Tanzu cluster

apiVersion: cluster.x-k8s.io/v1beta1
kind: Cluster
metadata:
  name: zonal-cluster01
  namespace: stretched-homelab
spec:
  clusterNetwork:
    services:
      cidrBlocks: ["198.51.100.0/12"]
    pods:
      cidrBlocks: ["192.0.2.0/16"]
    serviceDomain: "cluster.local"
  topology:
    class: tanzukubernetescluster
    version: v1.23.8+vmware.2-tkg.2-zshippable
    controlPlane:
      replicas: 3
    workers:
      machineDeployments:
        - class: node-pool
          name: node-pool-1
          replicas: 2
          #failure domain the machines will be created in
          #maps to a vSphere Zone; name must match exactly
          failureDomain: zone01
        - class: node-pool
          name: node-pool-2
          replicas: 2
          failureDomain: zone02
        - class: node-pool
          name: node-pool-3
          replicas: 2
          failureDomain: zone03
    variables:
      - name: vmClass
        value: best-effort-medium
      - name: storageClass
        value: zonal-sp
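Because each failureDomain value has to match a vSphere Zone name exactly, a quick check before applying the manifest can save a failed rollout. The sketch below works on a plain Python list that mirrors the machineDeployments section above rather than parsing the YAML file, and the function name is hypothetical.

```python
def check_failure_domains(machine_deployments, zones):
    """Return (unknown, uncovered).

    unknown:   node pools whose failureDomain is not a known zone name
    uncovered: zones that no node pool is pinned to
    """
    unknown = [
        md["name"] for md in machine_deployments
        if md.get("failureDomain") not in zones
    ]
    used = {md.get("failureDomain") for md in machine_deployments}
    uncovered = sorted(set(zones) - used)
    return unknown, uncovered


# Mirrors the machineDeployments section of the manifest above.
node_pools = [
    {"name": "node-pool-1", "replicas": 2, "failureDomain": "zone01"},
    {"name": "node-pool-2", "replicas": 2, "failureDomain": "zone02"},
    {"name": "node-pool-3", "replicas": 2, "failureDomain": "zone03"},
]
vsphere_zones = ["zone01", "zone02", "zone03"]

print(check_failure_domains(node_pools, vsphere_zones))
```

Two empty lists mean every node pool points at an existing zone and every zone gets worker nodes. The comparison is case-sensitive on purpose, so Zone03 would be reported as unknown.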

Configure Affinity Rules for service engines per cluster placement

To finalise the availability zones setup for our multi-zone supervisor and workload Tanzu clusters, I will configure VM/Host affinity rules to ensure that my service engines keep running in the clusters/zones where they were created and never move. Again, this is to ensure that the service engines never run in the same cluster/zone, which would compromise HA in a site failure scenario.

Configuring VM/Host affinity is nothing special for Tanzu nor for availability zones and detailed config steps with explanation can be found in VMware documentation, but for sake of convenience I have included screenshots of my lab setup on how I configured VM/Host affinity rules.

Choose your cluster > Configure > VM/Host Groups and create a host group with the hosts that you want to restrict the service engine to. Then create a VM group containing your service engine(s), and finally create a “Must run on” rule that ties the VM group to the host group. Detailed screenshots are added below.

Repeat the same for service engine(s) running on zone02.
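As a rough model of what a “Must run on” rule does, the sketch below computes which hosts a VM may be placed on once the rules are applied. The host names, service engine names and the function name are placeholders and do not reflect my lab inventory; the point is only that a must rule shrinks the candidate hosts to the host group, and that an empty result means the VM has nowhere to go.

```python
def allowed_hosts(vm, must_rules, healthy_hosts):
    """Hosts a VM may be placed on under 'Must run on' VM/Host rules.

    must_rules is a list of (vm_group, host_group) pairs of sets.
    """
    allowed = set(healthy_hosts)
    for vm_group, host_group in must_rules:
        if vm in vm_group:
            # A must rule restricts placement to the hosts in the group.
            allowed &= set(host_group)
    return sorted(allowed)


# Placeholder inventory: two hosts per zone, one SE pinned to each of two zones.
all_hosts = ["host-a1", "host-a2", "host-b1", "host-b2", "host-c1", "host-c2"]
rules = [
    ({"se-01"}, {"host-a1", "host-a2"}),
    ({"se-02"}, {"host-b1", "host-b2"}),
]

print(allowed_hosts("se-01", rules, all_hosts))
# One host of the first zone is down: se-01 can still run on the other.
print(allowed_hosts("se-01", rules, [h for h in all_hosts if h != "host-a1"]))
```

If both hosts of a zone are down, the list for that service engine is empty. It stays offline rather than landing in the other service engine's zone, and the other service engine keeps serving traffic.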

Install AKO, deploy testing application and Configure Ingress

Customers who use NSX ALB as the layer 4 load balancer for their Tanzu workloads usually also use it for the layer 7 Ingress needs of their containerised applications. The idea of this section is to create an Ingress resource for a test microservices application deployed on my multi-zone workload cluster, then simulate a site failure and test whether the application is still reachable via that Ingress.

For this step, I deployed AKO inside my multi-zone cluster, together with a microservices application that I downloaded from GitHub and an Ingress resource. This blog post is long enough, so I will not add the details of how I deployed those components. It is all detailed and documented in one of my previous blog posts HERE.

From my jumpbox I logged in to my multi-zone workload cluster and verified that my Ingress has an IP address assigned from VIPs pool

From NSX ALB UI, I can also see that Ingress is mapped to a virtual service which is backed by all the 6 worker nodes (control-plane nodes are displayed because I have AKO configured with service type NodePort) in my multi-zone cluster

Simulate Disaster Recovery Scenario and Verify HA Zones Operation

The last step is to verify that vSphere availability zones provide the expected HA for our multi-zonal Tanzu workloads. The test is simple: I will bring down the whole site hosting the frontend containers that serve my application homepage, which is accessible using the Ingress host address (see section above) http://onlineshop.zonal.nsxbaas.homelab

Let us first check which nodes are hosting my frontend pods

The above highlighted worker node is under cluster Twix (zone03) see below screenshot

To simulate a failure scenario, I will power off both hosts esx04 and esx06 and see whether I can still access my application on http://onlineshop.zonal.nsxbaas.homelab. The screenshot below was taken after I powered down both hosts:

Also from command line we can see that control-plane and 2 worker nodes are in NotReady state
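The numbers behind that output can be sketched as follows. The worker layout (two per zone) comes from the cluster manifest earlier in this post. The node names are made up, and the one-control-plane-node-per-zone layout is my assumption, consistent with exactly one control-plane node going NotReady. The quorum rule is the usual majority rule for an etcd-backed control plane.

```python
def surviving_nodes(node_zones, failed_zone):
    """Nodes that are not in the failed zone."""
    return sorted(n for n, z in node_zones.items() if z != failed_zone)


def has_quorum(total_members, healthy_members):
    """Majority quorum: more than half of the members must be healthy."""
    return healthy_members >= total_members // 2 + 1


# Assumed layout: 1 control-plane node and 2 workers per zone (names made up).
control_plane = {"cp-1": "zone01", "cp-2": "zone02", "cp-3": "zone03"}
workers = {
    "w-1a": "zone01", "w-1b": "zone01",
    "w-2a": "zone02", "w-2b": "zone02",
    "w-3a": "zone03", "w-3b": "zone03",
}

cp_left = surviving_nodes(control_plane, "zone03")
workers_left = surviving_nodes(workers, "zone03")
print(len(cp_left), len(workers_left), has_quorum(len(control_plane), len(cp_left)))
```

With one zone down, two of three control-plane nodes remain, which is still a majority, and four workers are left to take over the pods from the failed zone. Losing two zones at once would leave one control-plane node of three, which is no longer a majority.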

You can notice that pods that were running on failed nodes have entered Terminating state while new Pods are being spun up on nodes under other zones

From a web browser, I can also access my application successfully after DR event has been completed

You can also see that ALB has picked up the failure scenario but the layer 7 VS for Ingress is still up after switchover event

Hope you have found this blog post useful!

Hello,

Article is excellent! Thanks for that 🙂

I have a quick question related to Tanzu clusters, specifically in a scenario with two physical data centers (DCs). If, for instance, zones 1 and 2 in DC1 experience a failure, but zone 3 in DC2 remains unaffected, does this mean that the two supervisor control plane VMs go down as well, rendering the entire Tanzu cluster inaccessible? I’m curious about the resilience aspects in such a situation and how the system handles the loss of zones across different data centers.

Great article!

One thing: I don’t get why you created the Host/VM rules. Since HA works within the same cluster, the SEs would never move between the clusters.